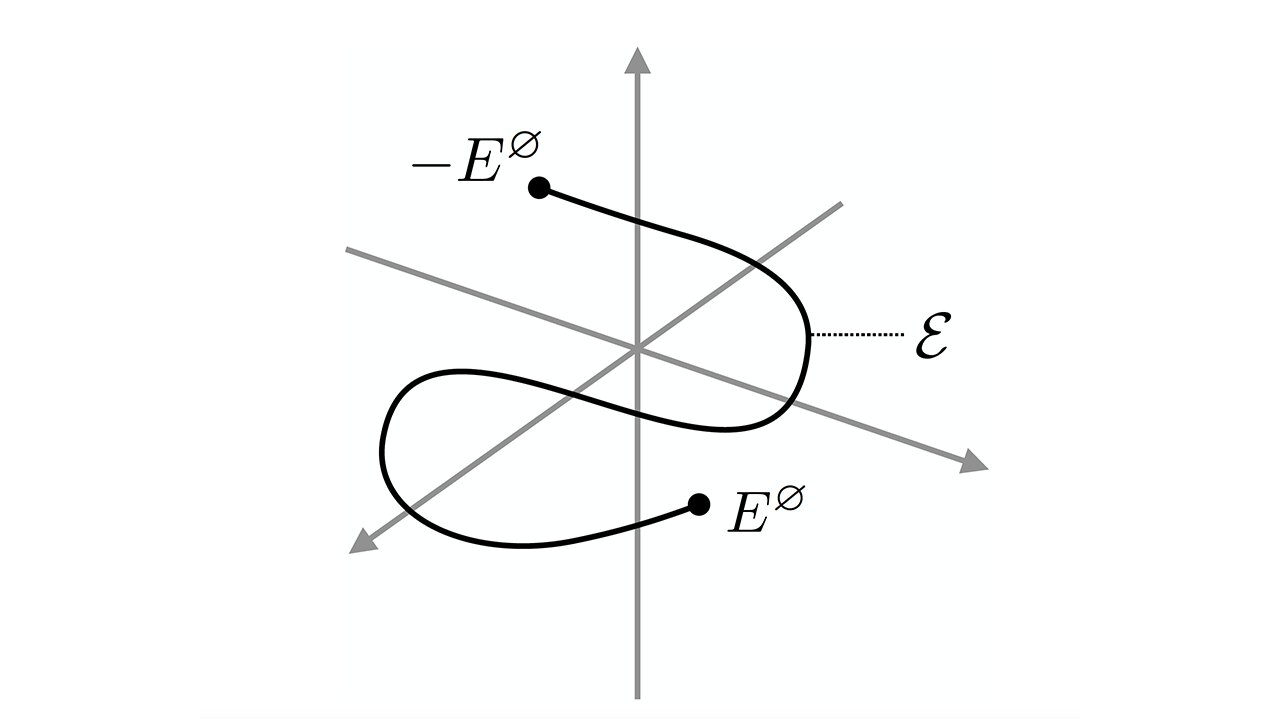

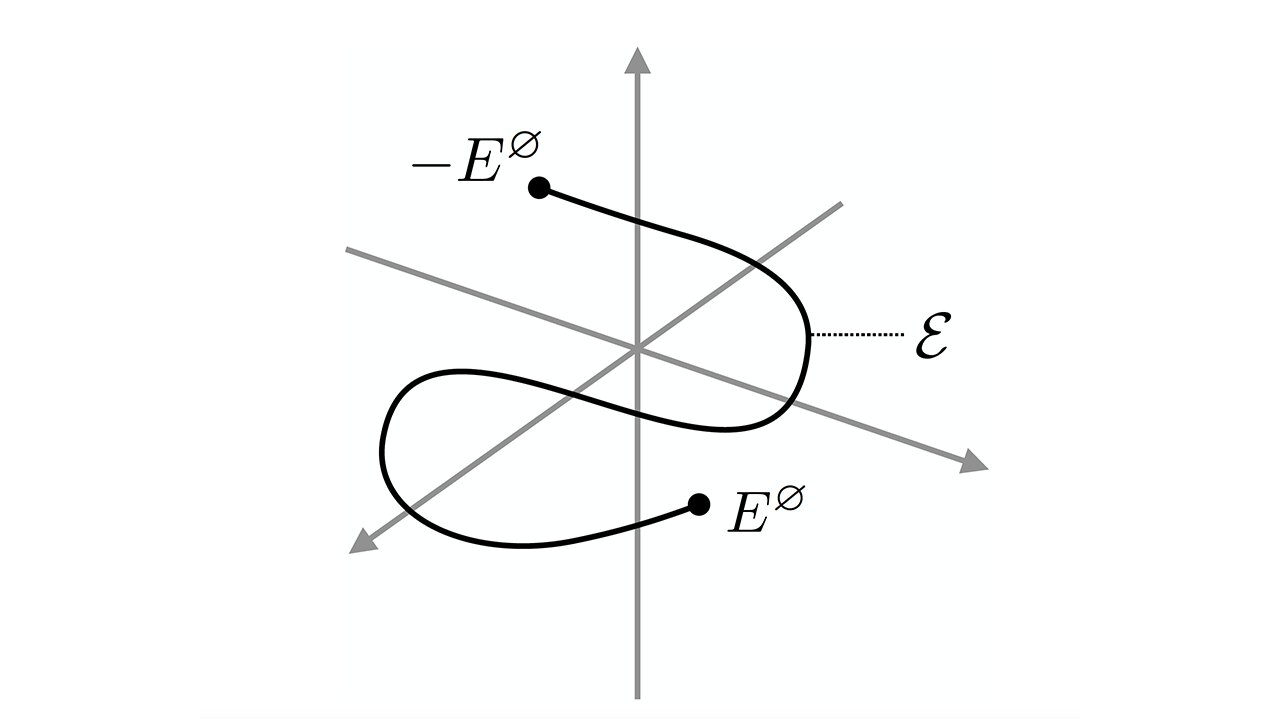

Figure 3 from the paper "Entropy production given constraints on the energy functions." Credit: Santa Fe Institute

The second law of thermodynamics states that the total entropy of a closed system may increase or stay the same, but never decrease. For example, an egg may fall off a table and break, but it will never spontaneously reassemble and jump back onto the table. Air can escape from a balloon, but the balloon will never inflate itself. Since at least 1904, physicists have studied the role of entropy in information theory, examining the energy transactions involved in adding or erasing bits in computers.

David Wolpert, a resident faculty member at SFI, has been researching the thermodynamics of computation for many years. He has been working with Artemy Kolchinsky, a physicist and former SFI postdoctoral fellow, to understand the relationship between thermodynamics, information processing, and computation. Their latest research on the topic, published in Physical Review E, applies these ideas to a broad range of classical and quantum areas, including quantum thermodynamics.

Wolpert says, "Computing systems were designed to lose information about the past as they evolve."

If a person enters "2+2" into a calculator, the machine outputs the answer, 4. In doing so, the machine loses information about the input, because not only 2+2 but also 3+1 (and many other pairs of numbers) produce the same output. From the answer alone, the machine cannot tell which pair of numbers was entered. Rolf Landauer, an IBM physicist, showed in 1961 that when information is erased in this way during a calculation, the entropy of the environment must increase.
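The loss of input information can be made concrete with a small sketch. It assumes, purely for illustration, that both operands are single digits (0 to 9) and that every input pair is equally likely; neither assumption comes from the paper. Because the sum is a deterministic function of the input, the information lost is the input entropy minus the output entropy.

```python
import math
from collections import Counter
from itertools import product


def shannon_entropy_bits(counts):
    """Shannon entropy (in bits) of the empirical distribution given by counts."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical input space: all ordered pairs of single digits, equally likely.
digits = range(10)
inputs = list(product(digits, repeat=2))

# Every input pair that the adder maps to the output 4.
preimages_of_4 = [(a, b) for a, b in inputs if a + b == 4]

input_counts = Counter(inputs)                      # each pair occurs once
output_counts = Counter(a + b for a, b in inputs)   # many pairs share a sum

h_in = shannon_entropy_bits(input_counts)
h_out = shannon_entropy_bits(output_counts)

# For a deterministic map, H(input | output) = H(input) - H(output).
bits_lost = h_in - h_out

print(f"pairs that sum to 4: {preimages_of_4}")
print(f"bits lost by adding: {bits_lost:.3f}")
```

Several distinct inputs collapse onto the same output, so `bits_lost` is strictly positive: the map is logically irreversible.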

Kolchinsky says, "If you erase some information, you must generate a little heat."

Kolchinsky and Wolpert wanted to find out: What is the energy cost of erasing information for a given system? Landauer derived an equation for the minimum energy dissipated during erasure. However, the SFI duo found that many systems dissipate more than that minimum. Kolchinsky says that there is a cost that seems to go beyond Landauer's bound.
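For a sense of scale, Landauer's bound is commonly written as a minimum of k_B T ln 2 of dissipated heat per erased bit, where k_B is Boltzmann's constant and T is the temperature of the environment. The sketch below evaluates that expression; the function name and the choice of 300 K as "room temperature" are illustrative assumptions, not values from the article.

```python
import math

# Boltzmann constant in joules per kelvin (exact in the 2019 SI definition).
K_B = 1.380649e-23


def landauer_limit_joules(temperature_kelvin, bits=1.0):
    """Minimum heat (in joules) dissipated when erasing `bits` bits at temperature T."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return bits * K_B * temperature_kelvin * math.log(2)


# Assumed room temperature of 300 K, chosen only for illustration.
room_temp_limit = landauer_limit_joules(300.0)
print(f"Landauer limit at 300 K: {room_temp_limit:.3e} J per bit")
```

The result is a few zeptojoules per bit. The bound is a floor, which is why any extra dissipation on top of it is the quantity of interest here.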

The only way to reach Landauer's minimum energy loss is to design a computer with a specific task in mind. If the computer performs a different calculation, it will generate additional entropy. Kolchinsky and Wolpert have shown that two computers can perform the same calculation but produce different amounts of entropy because of their expectations about the inputs. The researchers call this the "mismatch cost," or the cost of being wrong.

Kolchinsky says, "It's the cost between what the machine was built for and what it will be used for."
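One way to illustrate mismatch cost numerically is the form used in Kolchinsky and Wolpert's earlier work: the drop in Kullback-Leibler divergence between the actual input distribution and the distribution the machine was designed for, measured before and after the computation. The sketch below assumes that form and a simple deterministic map; the function names and the example distributions are hypothetical. The result is in nats; multiplying by k_B converts it to thermodynamic entropy units.

```python
import math


def kl_divergence(p, q):
    """KL divergence D(p || q) in nats; assumes q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def push_forward(dist, mapping, n_outputs):
    """Distribution over outputs after applying a deterministic state map."""
    out = [0.0] * n_outputs
    for state, prob in enumerate(dist):
        out[mapping[state]] += prob
    return out


def mismatch_cost_nats(actual, designed, mapping, n_outputs):
    """Drop in KL divergence (actual vs. designed-for inputs) across the map."""
    before = kl_divergence(actual, designed)
    after = kl_divergence(push_forward(actual, mapping, n_outputs),
                          push_forward(designed, mapping, n_outputs))
    return before - after


# Bit erasure: both input states (0 and 1) are reset to the single output state 0.
erase = {0: 0, 1: 0}

designed_for = [0.5, 0.5]   # hypothetical: machine built for unbiased bits
actually_used = [0.9, 0.1]  # hypothetical: bits it really sees are biased

cost = mismatch_cost_nats(actually_used, designed_for, erase, n_outputs=1)
no_mismatch = mismatch_cost_nats(designed_for, designed_for, erase, n_outputs=1)

print(f"mismatch cost: {cost:.4f} nats")
```

When the machine is used on exactly the inputs it was built for, the cost is zero; any other input distribution gives a non-negative extra cost, since KL divergence cannot increase under a fixed map.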

The duo have shown that mismatch cost can be studied in many systems: it is a phenomenon not only in information theory, but also in physics and biology. They have discovered a fundamental relationship between thermodynamic irreversibility (the case in which entropy increases) and logical irreversibility (the case in computation in which the initial state is lost). In effect, they have strengthened the second law of thermodynamics.

Kolchinsky and Wolpert's latest paper shows that this fundamental relationship extends more broadly than they previously thought, including to the thermodynamics of quantum computers. Information in a quantum computer is susceptible to loss or errors due to statistical fluctuations and quantum noise, which is why physicists continue to search for new methods of error correction. Kolchinsky believes that a better understanding of mismatch cost could help to predict and correct these errors.

Kolchinsky says, "There is a deep relationship between physics and information theory."


More information: Artemy Kolchinsky et al, Entropy production given constraints on the energy functions, Physical Review E (2021). DOI: 10.1103/PhysRevE.104.034129