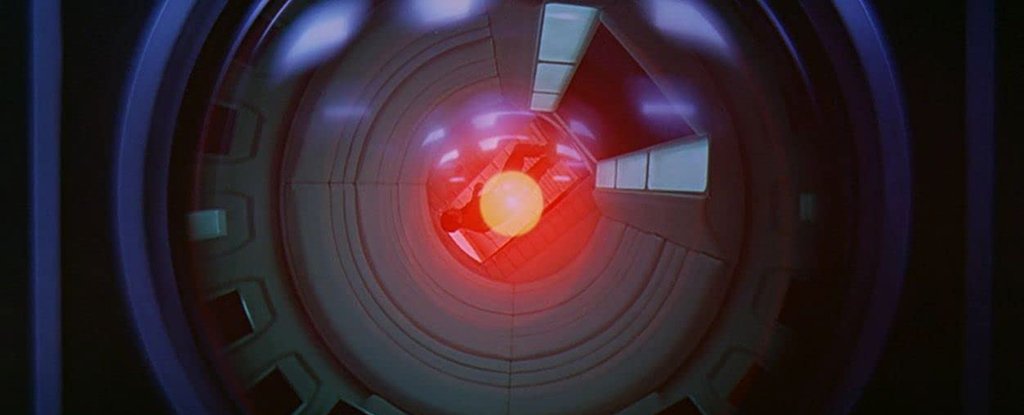

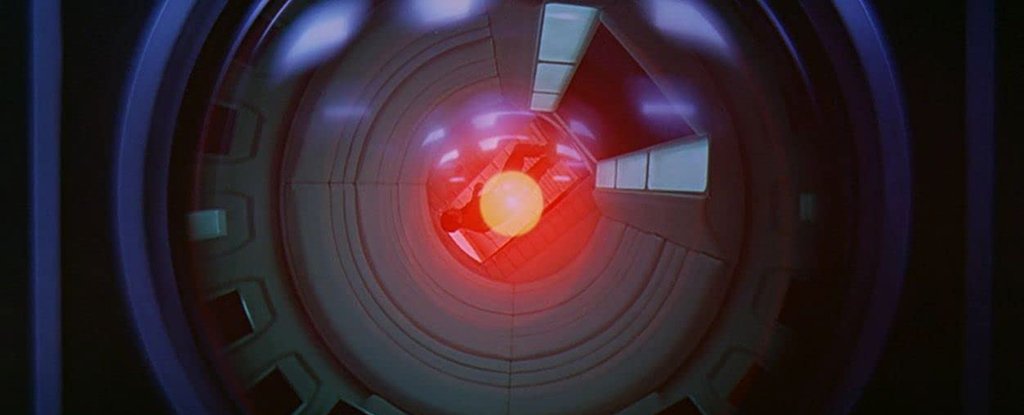

Artificial intelligence has been a topic of discussion for decades. In January 2021, scientists delivered their verdict on whether we would be able to control a high-level computer super-intelligence. The answer? Almost definitely not.

The catch is that controlling a super-intelligence far beyond human comprehension would require a simulation of that super-intelligence that we can analyze. But if we cannot comprehend it, we cannot create such a simulation.

The authors of the 2021 paper suggest that rules such as "cause no harm to humans" cannot be set if we don't understand the kinds of scenarios an AI will come up with. Once a computer system works at a level beyond the scope of our programmers, we can no longer set limits.

The researchers wrote that a super-intelligence poses a fundamentally different problem from those typically studied under the umbrella of "robot ethics".

"This is because superintelligence can mobilize a variety of resources to accomplish objectives that are possibly incomprehensible to humans."

Part of the team's reasoning comes from the halting problem, put forward by Alan Turing in 1936. The problem asks whether a computer program will reach a conclusion and an answer (so it halts), or simply loop forever trying to find one.

Turing proved through some smart math that, while we can know the answer for certain specific programs, it is logically impossible to find a method that tells us the answer for every possible program. This brings us back to AI, which in a super-intelligent state could theoretically hold every possible computer program in its memory at once.
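Turing's argument can be sketched in a few lines of Python. This is only an illustration, not code from the paper: the names `make_paradox`, `halts_within` and `is_refuted` are hypothetical, and programs are modeled as generator functions so that a "forever" loop can be observed safely under a step budget. The idea is that any claimed halting checker can be handed a program built to do the opposite of whatever the checker predicts.

```python
from typing import Callable, Iterator

# Assumption for this sketch: a "program" is a generator function, and each
# `yield` counts as one step of execution.
Program = Callable[[], Iterator[None]]
# A claimed halting checker: returns True if it predicts the program halts.
Oracle = Callable[[Program], bool]


def make_paradox(halts: Oracle) -> Program:
    """Build a program that does the opposite of what `halts` predicts for it."""
    def paradox() -> Iterator[None]:
        if halts(paradox):
            while True:  # predicted to halt, so loop forever instead
                yield
        # predicted to loop forever, so halt immediately instead
    return paradox


def halts_within(program: Program, max_steps: int) -> bool:
    """Run `program` for at most `max_steps` steps and report whether it finished."""
    run = program()
    for _ in range(max_steps):
        try:
            next(run)
        except StopIteration:
            return True
    return False


def is_refuted(halts: Oracle, max_steps: int = 1000) -> bool:
    """True if the checker's prediction about its own paradox program is wrong."""
    paradox = make_paradox(halts)
    return halts(paradox) != halts_within(paradox, max_steps)


print(is_refuted(lambda program: True))   # a checker that always says "halts"
print(is_refuted(lambda program: False))  # a checker that always says "loops"
```

Both toy checkers are refuted. The same construction applies to any deterministic checker that returns an answer, which is the heart of the proof. The step budget is only a convenience for the demo and is not itself a way to decide halting.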

Any program written to stop AI from harming people and destroying the planet, for example, may reach a conclusion (and halt) or it may not. It is mathematically impossible for us to be sure either way, which means the AI is not containable.
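One way to see why a perfect harm checker runs into the halting problem is a reduction, sketched here in Python under stated assumptions. Every name below (`HarmDone`, `harm_humans`, `then_harm`, `halts_via_containment`, `naive_is_harmful`) is hypothetical and chosen for illustration, and the sketch is not presented as the paper's own formulation. If a checker could always tell whether a program ends up causing harm, it could also tell whether any program halts, simply by appending a harmful step to that program.

```python
from typing import Callable

Program = Callable[[], None]


class HarmDone(Exception):
    """Hypothetical stand-in for 'the program did something harmful'."""


def harm_humans() -> None:
    # Illustrative only: signal harm by raising an exception.
    raise HarmDone()


def then_harm(program: Program) -> Program:
    """Wrap `program` so that harm occurs exactly when `program` finishes."""
    def wrapped() -> None:
        program()      # if this never returns, the next line is never reached
        harm_humans()
    return wrapped


def halts_via_containment(program: Program,
                          is_harmful: Callable[[Program], bool]) -> bool:
    """With a perfect `is_harmful`, this would decide halting for any program."""
    return is_harmful(then_harm(program))


def naive_is_harmful(program: Program) -> bool:
    """Toy checker that just runs the program; it hangs on non-halting input."""
    try:
        program()
    except HarmDone:
        return True
    return False


# A program that halts immediately is reported as halting.
print(halts_via_containment(lambda: None, naive_is_harmful))
```

The toy checker only works because the example program halts. Given a program that loops forever, it would never return, and by Turing's result no cleverer checker can answer correctly in every case.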

"In effect this renders the containment algorithm inoperable," stated Iyad Rahwan (computer scientist, Max-Planck Institute for Human Development, Germany) back in January.

Instead of teaching AI ethics and telling it not to destroy the world, which no algorithm can be absolutely certain of doing, the researchers suggest limiting the super-intelligence's capabilities. It could be cut off from certain networks or from parts of the internet, for example.

The study rejects this idea too, arguing that it would limit the reach of the artificial intelligence. If we are not going to use it to solve problems beyond the scope of humans, the argument goes, why create it at all?

Artificial intelligence is a very complex topic. We might not be able to predict when an uncontrollable super-intelligence will arrive. This means that we must start asking serious questions about where we are going.

Manuel Cebrian, a computer scientist at the Max-Planck Institute for Human Development, said that a super-intelligent machine that controls the world sounds like science fiction. "But there are machines that can perform important tasks without the need for programmers.

"The question is, therefore, whether this could at one point become uncontrollable or dangerous for humanity."

The research was published in the Journal of Artificial Intelligence Research.

This article was first published in January 2021.