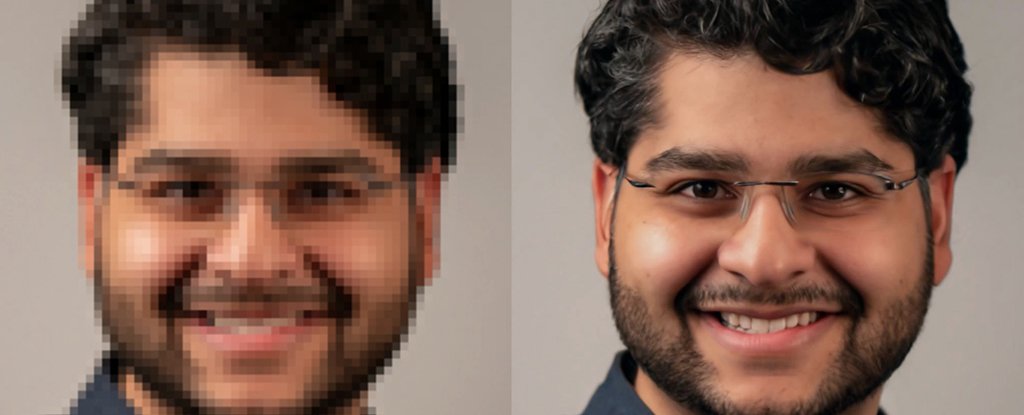

Perhaps you've seen sci-fi movies and television shows in which the protagonist zooms in on an image to reveal a face or number plate. Google's new artificial intelligence engines are based on diffusion models and can do this exact trick.

This is a complex process that can be difficult to master. Essentially, the camera adds picture details that it didn't capture originally. It does this by using super smart guesswork based upon similar images.

Google calls this natural image synthesis. In this instance, it is image super-resolution. It starts with a blocky, pixelated image and ends up with something sharp and clear that looks natural. Although it may not be exact, it is close enough to match the original to appear real to human eyes.

(Google Research).

Google actually has two new AI tools. The first, called SR3, is a Super-Resolution via Repeated Refinement. It adds noise to an image, then reverses the process and takes it away. This works much like an image editor might do to sharpen your vacation snaps.

"Diffusion models work in a way that corrupts the training data by gradually adding Gaussian noise to the data, slowly wiping away details until it becomes pure noise and then training an neural network to reverse this corruption," explained Jonathan Ho, research scientist at Google Research, and Chitwan Saharia, software engineer at Google Research.

SR3 was able to imagine what a full-resolution version looks like using a combination of machine learning magic and a large database of images. The paper Google posted on arXiv has more information.

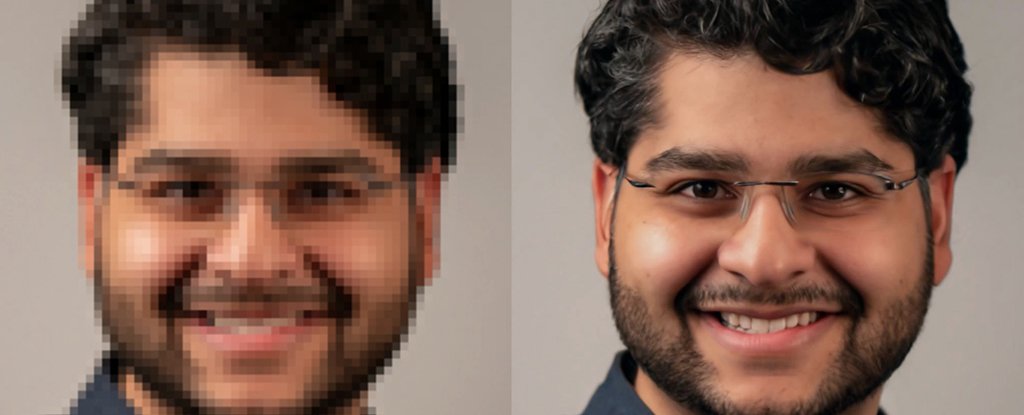

CDM (or Cascaded Diffusion Models) is the second tool. These are described by Google as "pipelines" that allow diffusion models, including SR3, to be directed in high-quality ways for image resolution enhancements. Google published a paper about this.

CDM in action. (Google Research).

Google claims that the CDM approach beats other methods of upsizing images by using different enhancement models at different resolutions. ImageNet is a huge database of images that are used to train AI engines for visual object recognition research.

The final results of SR3/CDM are amazing. A standard test using 50 volunteers showed that SR3-generated images were mistaken for real photographs around 50% of the time. This is impressive considering that a perfect algorithm would have a score of at least 50 percent.

It is worth noting that these enhanced images don't exactly match the originals. However, they are carefully calculated simulations based upon advanced probability maths.

Google claims that the diffusion approach yields better results than other options such as generative adversarial network (GANs), which pit two neural networks against one another to refine results.

(Google Research).

Google promises much more with its new AI engines, and associated technologies, not only in terms of scaling images of faces and natural objects but also in other areas such as probability modeling.

The team says that they are eager to test diffusion models for a variety of generative modeling issues.