The popular image synthesis model Stable Diffusion can be used to compress existing bitmapped images with less visual artifacts than other compression methods.

Stable Diffusion is an artificial intelligence model that uses text descriptions to generate images. The model studied millions of images from the internet. During the training process, the model makes statistical associations between images and related words, making a much smaller representation of key information about each image and storing them as weights.

When Stable Diffusion analyzes and "compresses" images into weight form, they reside in what researchers call "latent space," which is a way of saying that they exist as a sort of fuzzy potential that can be realized into images. The weights file is less than 4 gigabytes, but it contains knowledge about hundreds of millions of images.

Bhlmann forced his images through Stable Diffusion's image encoder process, which takes a low-precision 512512 image and turns it into a higher-precision 6464 latent space representation, rather than using text prompt. At this point, the image can be expanded back into a 512 512 image with good results.

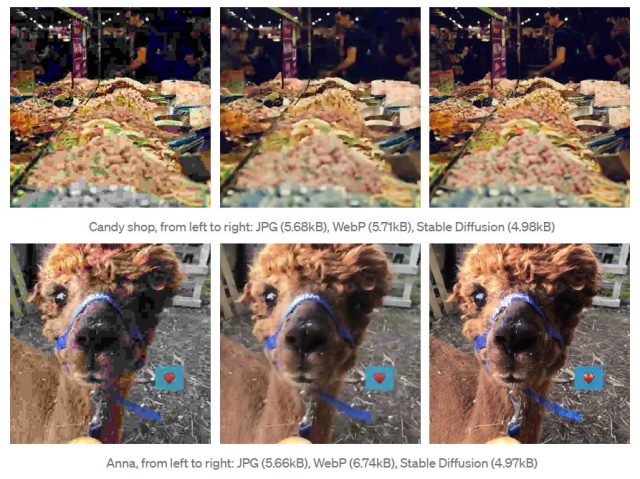

AdvertisementWhile running tests, Bhlmann found that a novel image compressed with Stable Diffusion looked better than larger files. He shows a photo of a llama that has been compressed down to 5.68KB using a number of different methods. The Stable Diffusion image seems to have more clarity and less compression artifacts than other formats.

It's not good with faces or text, and it can actually hallucinate features that weren't present in the source image. You don't want your image compressor to invent details in an image that isn't there. Extra decoding time is required and a Stable Diffusion weights file is needed.

The use of Stable Diffusion is more of a hack than a practical solution, but it could point to a future use of image synthesis models. There are more technical details about Bhlmann's experiment in his post on Towards Artificial Intelligence.